You can load from multiple files by specifying a key prefix for the file In a later step, you compare the time difference between loadingįrom a single file and loading from multiple files. To demonstrate this principle, the dataįor each table in this tutorial is split into eight files, even though the filesĪre very small. The COPY command can load data from multiple files in parallel, which is muchįaster than loading from a single file. In this step, you use the CSV and NULL AS options to load the PART table. String that is enclosed in single quotation marks must not contain any Own Amazon Web Services account and IAM role. This step assumes the bucket and the cluster are in the same region.Īlternatively, you can specify the region using the REGION option with the COPY command. Name of a bucket in the same region as your cluster. To load the SSB tables, follow these steps: The command to each table demonstrates different COPY options and troubleshooting You use the following COPY commands to load each of the tables in the SSB schema. 
Tutorial, you use the following COPY command options and features:įor information on how to load from multiple files by specifying a key prefix, see Load the PART table using NULLįor information on how to load data that is in CSV format, see Load the PART table using NULLįor information on how to load PART using the NULL AS option, see Load the PART table using NULLįor information on how to use the DELIMITER option, see Load the SUPPLIER table usingįor information on how to use the REGION option, see Load the SUPPLIER table usingįor information on how to load the CUSTOMER table from fixed-width data, see Load the CUSTOMER table usingįor information on how to use the MAXERROR option, see Load the CUSTOMER table usingįor information on how to use the ACCEPTINVCHARS option, see Load the CUSTOMER table usingįor information on how to use the MANIFEST option, see Load the CUSTOMER table usingįor information on how to use the DATEFORMAT option, see Load the DWDATE table usingįor information on how to compress your files, see Load the LINEORDER table usingįor information on how to use the COMPUPDATE option, see Load the LINEORDER table usingįor information on how to load multiple files, see Load the LINEORDER table using You can specify a number of parameters with the COPY command to specify fileįormats, manage data formats, manage errors, and control other features. For more information about managing access, go to Managing access permissions to your Additional credentials are required if your data is encrypted.įor more information, see Authorization parameters in the COPY command To load data from Amazon S3, the credentials must include ListBucket and GetObject These credentials include an IAM role Amazon Resource Name (ARN). To access the Amazon resources that contain the data to load, you must provideĪmazon access credentials for a user with sufficient In these cases, you can use a manifestĮach load file and its unique object key. 
In others, you might need toĮxclude files that share a prefix. In some cases, you might need to load files with different prefixes, forĮxample from multiple buckets or folders. Prefix custdata.txt can refer to a single file or to a set of Uses to load all objects that share the key prefix. The object path is a key prefix that the COPY command Includes the bucket name, folder names, if any, and the object name. Object path for the data files or the location of a manifest file that explicitlyĪn object stored in Amazon S3 is uniquely identified by an object key, which Name of the bucket and the location of the data files. When loading from Amazon S3, you must provide the

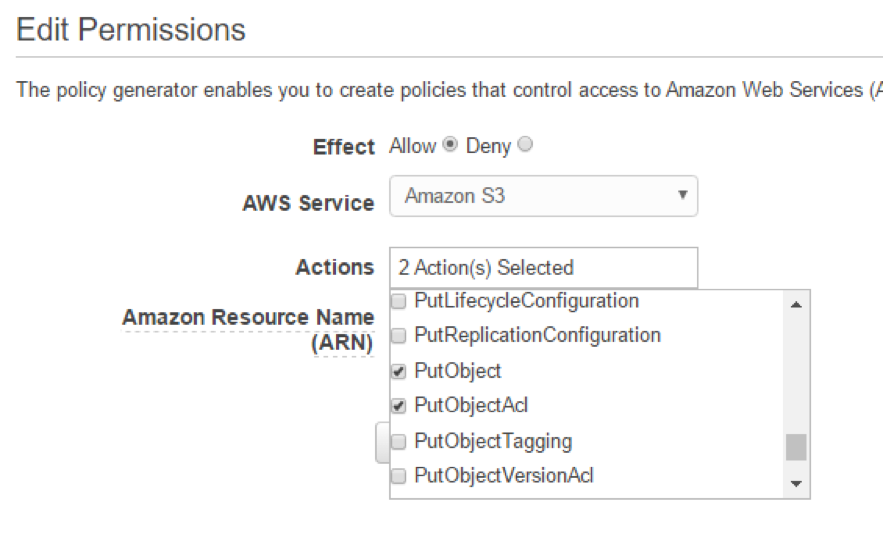

Load from data files in an Amazon S3 bucket. Remote host using an SSH connection, or an Amazon DynamoDB table. You can use the COPY command to load data from an Amazon S3 bucket, an Amazon EMR cluster, a For more information, seeĬolumn List in the COPY command reference. You don't use column lists in this tutorial. You can optionally specify a column list, that is aĬomma-separated list of column names, to map data fields to specific columns. Column listīy default, COPY loads fields from the source data to the table columns in New input data to any existing rows in the table. The table can be temporary or persistent. The table must already exist in theĭatabase. To run a COPY command, you provide the following values. COPY table_name FROM data_source CREDENTIALS access_credentials
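
As a rough illustration of how the values in the COPY syntax (table name, data source, credentials, and options) fit together, the following Python sketch assembles a COPY statement as text. The helper name `build_copy_command`, the bucket `example-bucket`, the key prefix, the account ID, the role name, and the NULL AS value are all hypothetical placeholders, and the `aws_iam_role=` credentials form is an assumption that does not appear on this page. The sketch only builds a string; it does not connect to a cluster.

```python
def build_copy_command(table_name, data_source, iam_role_arn, options=()):
    """Assemble COPY statement text from the values the syntax calls for.

    All argument values used below are hypothetical placeholders.
    """
    parts = [
        f"COPY {table_name}",
        # The data source is an S3 object path, which acts as a key prefix.
        f"FROM '{data_source}'",
        # Assumed credentials form: an IAM role ARN inside single quotes.
        f"CREDENTIALS 'aws_iam_role={iam_role_arn}'",
    ]
    # Options such as CSV or NULL AS follow the credentials clause.
    parts.extend(options)
    return "\n".join(parts) + ";"


if __name__ == "__main__":
    command = build_copy_command(
        table_name="part",
        data_source="s3://example-bucket/load/part-csv.tbl",  # hypothetical prefix
        iam_role_arn="arn:aws:iam::123456789012:role/ExampleRedshiftRole",  # hypothetical
        options=["CSV", r"NULL AS '\000'"],  # NULL AS value is an assumption
    )
    print(command)
```

Because the object path is treated as a key prefix, a single statement built this way would cover every file whose key begins with that prefix, which is how the eight-file split mentioned in this tutorial can load in parallel.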
